Historical ideas and approaches to AI

The idea of making artificially intelligent beings has been around for centuries. The ancient Greeks and Chinese had myths about robots, the ancient Egyptians built automatons, old European clocks had mechanical cuckoos, and wind-up walking toys have long been popular. Science fiction, from Mary Shelley’s Frankenstein to 1950s drive-in mad scientist films to Doctor Who, has long featured the creation of artificial beings.

Artificial intelligence has long been based on the idea, one that has been questioned in recent years, that the process of human thinking can be mechanized. Many people throughout history have thought that the human mind is essentially a computer working on symbolic logic, and that one can, at least theoretically, duplicate the human mind and human thinking by manipulating symbols, such as with a computer.

Mathematician and philosopher Gottfried Leibniz (1646-1716) envisioned a symbolic language that would reduce philosophical arguments to mathematical calculations, so that philosophical debates could be settled the way an accountant calculates finances. The early 20th-century study of formal mathematical logic by mathematicians and philosophers such as Bertrand Russell, Alfred North Whitehead, and David Hilbert also greatly influenced early artificial intelligence work.

With the introduction of computers that manipulated numbers, mathematicians realized that the same machines could also manipulate symbols, and wondered whether they could actually create an artificial brain.

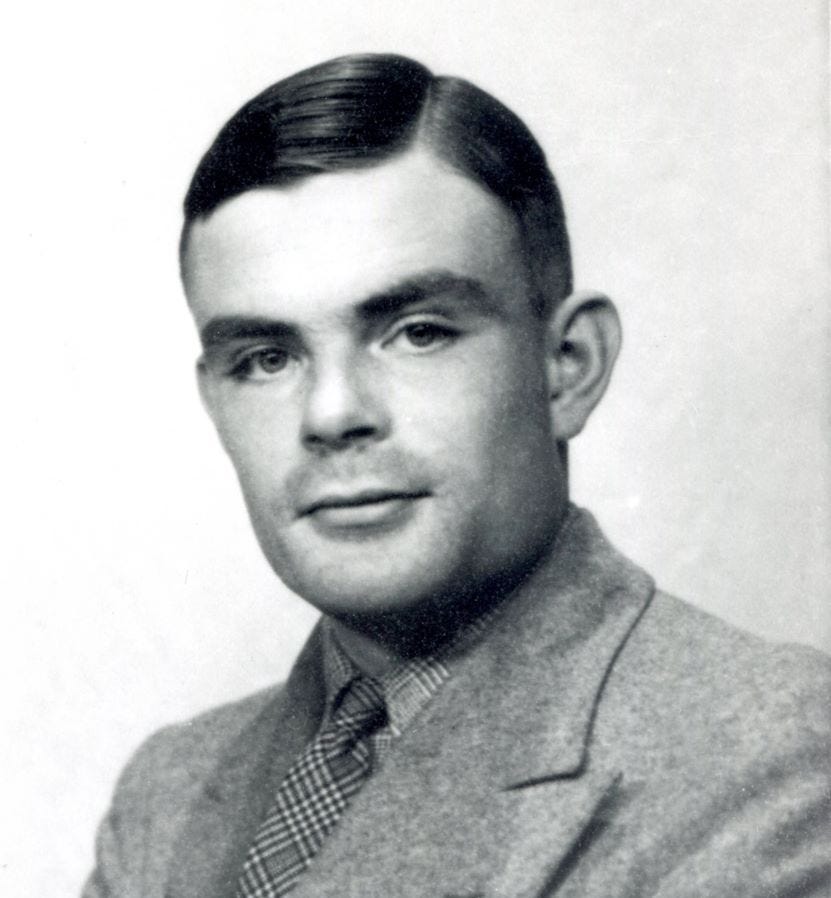

The first basic research into artificial minds took place from the 1930s to the 1950s. Scientists found that the human brain worked with electrical impulses, much like digital signals. British mathematician and computer science pioneer Alan Turing theorized that any computation could be described digitally. This all pointed to the possibility that an electronic brain could be made.

In 1943, Walter Pitts and Warren McCulloch, who both later worked at M.I.T., showed how networks of simple artificial neurons could perform logical functions.
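To make the idea concrete, here is a minimal sketch of a threshold neuron computing logical functions. It uses a simplified modern formulation with weights and a threshold, not the exact notation of the original work, and the function names (`threshold_neuron`, `logic_and`, and so on) are made up for this illustration.

```python
def threshold_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def logic_and(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return threshold_neuron([a, b], [1, 1], threshold=2)


def logic_or(a, b):
    # A single active input is enough to reach a threshold of 1.
    return threshold_neuron([a, b], [1, 1], threshold=1)


def logic_not(a):
    # A negative (inhibitory) weight: the unit fires only when the input is silent.
    return threshold_neuron([a], [-1], threshold=0)


def logic_xor(a, b):
    # XOR is built here as a small network: (a OR b) AND NOT (a AND b).
    return logic_and(logic_or(a, b), logic_not(logic_and(a, b)))


if __name__ == "__main__":
    print("a b AND OR XOR")
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, logic_and(a, b), logic_or(a, b), logic_xor(a, b))
```

Each gate is the same kind of unit with different weights and thresholds, and wiring a few units together yields a function (XOR) that is assembled here from three simpler gates.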

In 1950, Alan Turing wrote a famous paper, "Computing Machinery and Intelligence," speculating about creating machines that could think.

A 1956 conference at Dartmouth College introduced the name artificial intelligence and set its mission. Computer scientist and cognitive scientist John McCarthy wrote that the conference was "to proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it."

Since then, computer scientists have been trying a wide variety of techniques and ideas to create artificial intelligence, both weak AI and artificial general intelligence.

Weak AI is a form of artificial intelligence designed to perform a narrow task or a specific set of tasks. It does not possess general intelligence or consciousness.

Artificial general intelligence (AGI), also known as strong AI, means artificial intelligence on par with the human mind. It would likely need sentience and consciousness, and it has never been achieved.

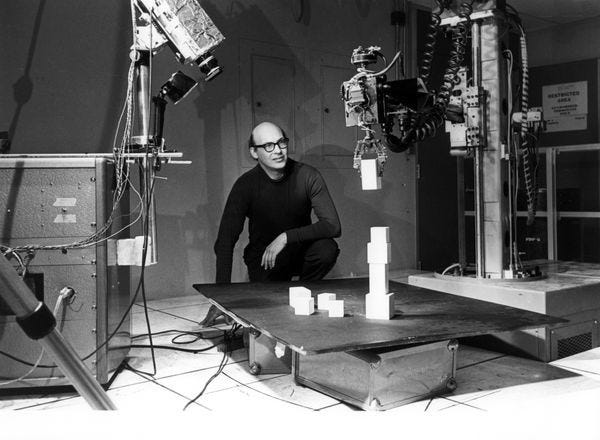

AI pioneers Marvin Minsky and John McCarthy used a symbolic AI approach that dominated AI research and practice from the 1950s to the 1980s. This approach involved writing logical, step-by-step rules for the computer to follow, mirroring what the researchers believed was the logical, step-by-step way humans reason. Other computer scientists introduced heuristics, or rules of thumb, to simplify the process. In essence, these symbolic AI systems try to represent human knowledge as logical facts and rules.
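As a rough illustration of the facts-and-rules idea, the sketch below applies hand-written if-then rules to a set of known facts until nothing new can be concluded (a technique usually called forward chaining). The rules, the fact names, and the `forward_chain` function are invented for this example and do not come from any particular historical system.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new facts can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are already known.
            if conclusion not in known and all(p in known for p in premises):
                known.add(conclusion)
                changed = True
    return known


# Hypothetical knowledge base: each rule is (premises, conclusion).
RULES = [
    (("has_feathers",), "is_bird"),
    (("is_bird", "can_fly"), "can_migrate"),
    (("is_bird", "cannot_fly", "swims"), "is_penguin"),
]

if __name__ == "__main__":
    derived = forward_chain({"has_feathers", "cannot_fly", "swims"}, RULES)
    print(sorted(derived))
```

All of the knowledge here was typed in by a person. The program only chains the rules together, which is both the strength of the approach (every conclusion can be traced to a rule) and its limit (it knows nothing it was not told).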

Much commercial and government funding went into this area, and many scientists using these methods made grand pronouncements that artificial general intelligence was just around the corner. In 1970, M.I.T.’s Marvin Minsky was quoted as saying, "In three to eight years we will have a machine with the general intelligence of an average human being." However, while this approach produced impressive weak AI results, it came nowhere near achieving artificial general intelligence, and funding was cut.

While work on artificial general intelligence was often neglected during this period of reduced funding, much work continued on many aspects and applications of weak artificial intelligence that performed simplified, straightforward tasks.

In the 1990s, large businesses discovered that focused, weak artificial intelligence techniques worked well for analyzing large amounts of company data. For many of these businesses, this was the first time AI showed clear commercial value, and investment and financial interest in this area of AI increased greatly.

Research into and development of artificial neural networks brought a prominent, different approach to artificial intelligence. A neural network is an attempt to simulate a brain, modeled on the biological neurons of the human brain. It is a ‘bottom-up’ technique in that it analyzes the base data, finding patterns, relationships, and so on, to come up with results and conclusions. It underlies the deep learning you hear about, and has had great success in areas such as image and sound interpretation, personalized services such as those from Amazon and Google, search engines, online mapping, analyzing medical data to treat diseases and discover new drugs, and identifying financial fraud.
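The bottom-up idea can be sketched with the simplest possible case: a single artificial neuron (a perceptron) that is given only examples and adjusts its own weights until its answers match them. This is a toy under stated assumptions, far from a deep network, and the function names and settings (`train_perceptron`, `predict`, 20 passes, a learning rate of 0.1) are choices made for this illustration.

```python
def predict(weights, bias, inputs):
    """Return 1 if the weighted sum of the inputs plus the bias is positive, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(examples, epochs=20, learning_rate=0.1):
    """Learn weights and a bias from (inputs, target) pairs using the perceptron rule."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            # Nudge the weights toward the target whenever the prediction is wrong.
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Training data for logical OR: no rule is written down, only examples.
OR_EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

if __name__ == "__main__":
    weights, bias = train_perceptron(OR_EXAMPLES)
    for inputs, target in OR_EXAMPLES:
        print(inputs, "->", predict(weights, bias, inputs), "(expected", target, ")")
```

Nobody tells the program what OR means. It starts with all weights at zero and finds a working setting from the data alone. Modern neural networks stack many such units in layers and train them with more sophisticated methods.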

Today, both symbolic AI and artificial neural network techniques are used. Each has its own limits and works best in different areas. Symbolic AI produced the computer that can beat any person at chess, while deep learning with artificial neural networks has produced the technology that can identify your face and voice on your cell phone.
