Propaganda, Language, and Emotional Manipulation

Propaganda is often imagined as crude deception made up of obvious lies, authoritarian slogans, and state-controlled broadcasts. In practice, however, it is usually more subtle and more effective. It works not so much through evidence or argument as by shaping perception at the level of feeling, influencing what seems threatening, admirable, shameful, or morally urgent. Once emotion and interpretation are guided, belief often follows with little resistance.

While propaganda is commonly associated with authoritarian regimes, it is not limited to them. The same mechanisms are present in everyday life. Advertising frames products to highlight benefits and downplay drawbacks. Political campaigns present selective narratives that emphasize strengths and ignore weaknesses. Organizations shape how they are perceived through branding and messaging. News and media institutions, particularly those with strong ideological leanings, frame events selectively, emphasizing certain facts and interpretations while minimizing others. The techniques differ in degree and intent, but the underlying psychological mechanisms are the same.

Propaganda as Emotional Steering

Propaganda uses selection, repetition, framing, and emotional cues to influence belief and behavior. It does not need to rely on outright falsehood. More often, it works by selectively presenting reality, emphasizing certain facts while omitting others. By shaping what is noticed and what is ignored, it influences perception before careful evaluation begins.

Emotion affects attention, memory, and judgment before deliberate reasoning takes hold. Fear narrows focus, anger pushes action, and moral outrage reduces complexity. Feelings of belonging and shared purpose strengthen commitment and spread quickly through groups.

This helps explain why emotionally charged messages are so powerful. A fearful public is more likely to accept simple explanations and decisive action. A morally outraged public is more likely to divide the world into good and evil. In both cases, evidence that complicates the story often arrives too late.

During the Red Scare in the United States, fear of communist infiltration led many people to accept accusations with little evidence. In more recent times, viral social media outrage often produces the same pattern, with emotionally satisfying narratives spreading faster than verification.

Why Propaganda Works in Groups

Propaganda is especially effective in groups because groups depend on common narratives to unify members and guide action. Information that supports group identity or signals threat is more likely to be accepted and repeated. Information that challenges the group’s story creates discomfort and social risk, discouraging open criticism.

Fear is especially effective because it activates threat detection and encourages simple interpretations. Pride, grievance, and moral certainty strengthen cohesion and shared perspective in similar ways. Wartime propaganda often frames conflict as a struggle between good and evil, unifying the group and discouraging doubt. The goal is not always to prove a claim true, but to make doubt feel disloyal.

Language as a Tool of Influence

Language shapes interpretation because words do not merely describe reality. They frame it by directing attention before evidence is evaluated.

A policy described as “security” sounds different from one described as “surveillance.” A military action described as “liberation” sounds different from one described as “occupation.” The facts may be the same, but the words guide interpretation.

This framing is often selective rather than false. Descriptions can emphasize real aspects of a situation while ignoring others that would lead to a different conclusion. Because the language is not necessarily inaccurate, it is harder to challenge even when it is misleading.

Labels compress complex judgments into simple terms with moral force. Words such as “patriot,” “traitor,” “extremist,” and “victim” do not just identify; they judge. Euphemisms can blunt moral reactions. Civilian deaths may be described as “collateral damage.” Censorship may be described as “safety.” Torture may be renamed “enhanced interrogation.” Such language creates distance between action and consequence.

A clear example came after the September 11 attacks. Expanded monitoring and data collection were described as measures for “national security” and “public safety,” language that stressed protection. Critics described the same policies as “mass surveillance,” language that stressed intrusion and loss of liberty. The underlying actions were the same, but the framing shaped how they were understood.

Language, Conformity, and the Narrowing of Thought

Shared language is normal in any community, but it can become a mechanism of conformity when some expressions are required and others discouraged. Under these conditions, the range of acceptable speech narrows, and with it the range of acceptable thought.

Authoritarian political, social, and religious movements use language to create ideological conformity. Shared terminology can signal group membership, but it can also function as a way of enforcing belief.

When people are expected to adopt specific words, phrases, or ways of framing issues, they are often being asked to adopt the underlying ideology those terms carry. A group's characteristic vocabulary often expresses a worldview, and requiring others to use that vocabulary is a way of reinforcing it.

Language can function as a tool of indoctrination and thought control. George Orwell argued that political systems often use language to limit what people are capable of thinking by structuring and restricting available concepts. When language reduces the range of expression, it reduces the range of thought. The effect is not only to guide communication, but to shape consciousness itself.

Linguist Noam Chomsky argued that control is often maintained not by silencing debate, but by limiting its boundaries. Discussion is allowed, even encouraged, but only within an accepted range. This creates the appearance of open inquiry while keeping deeper assumptions beyond challenge. People argue over details and surface issues and believe they are having a free and open debate, but rarely question the framework itself.

This dynamic does not require formal censorship. It often operates through social pressure, reputational risk, and expectations about what can be said. Certain words, questions, or lines of reasoning become difficult to express without penalty. In these conditions, language becomes a tool not only of communication but of enforcement.

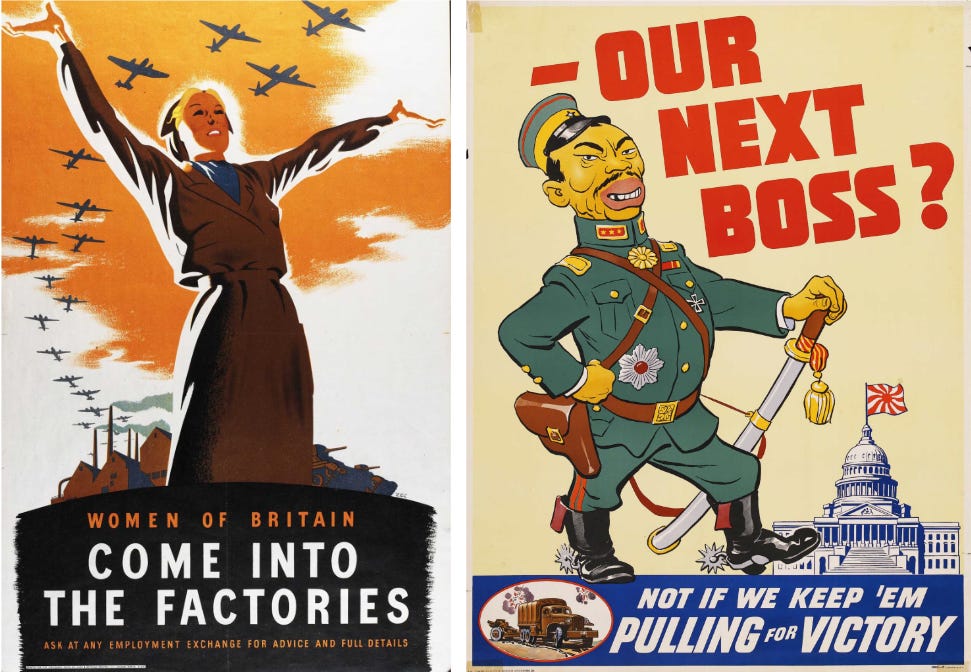

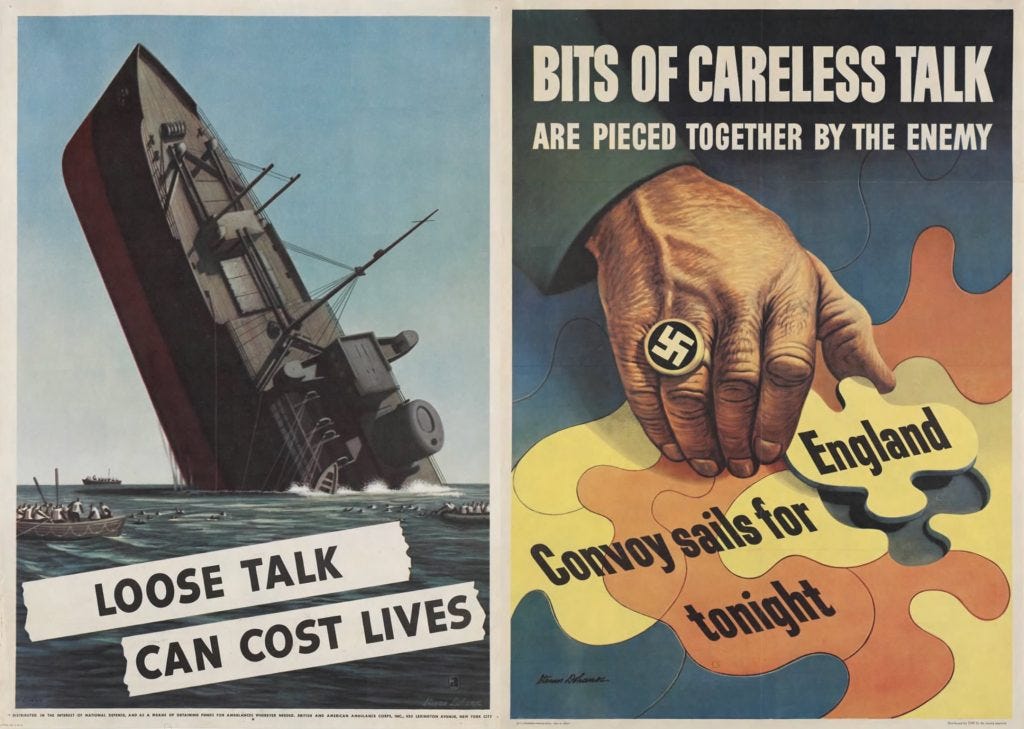

Visual and Symbolic Propaganda

Images often work faster and more directly than words because they trigger emotional reactions without requiring analysis. Photographs, symbols, slogans, flags, and staged events communicate meaning instantly. Repeated imagery builds associations over time, linking people and ideas with feelings such as pride, danger, or legitimacy.

Modern memes are a simple example. They condense complex claims into brief, emotionally loaded images or jokes that spread quickly and signal group identity. Political campaigns rely heavily on symbolic imagery. Candidates are shown in settings that communicate strength and authenticity before any argument is made.

These images are often selective rather than fabricated. A photograph may be real but chosen to highlight one aspect while excluding others. A short video clip may present a genuine moment but remove surrounding context. The result is not necessarily false, but it is incomplete in a way that guides interpretation.

Totalitarian regimes made this technique central, but modern societies use the same psychological mechanisms in more decentralized ways through advertising, campaign media, and viral content.

The Cost of Propaganda

The danger of propaganda is not only that it spreads falsehood. Its deeper danger is that it narrows the range of acceptable thought. When language, imagery, and repeated narratives define what can be said and considered, critical evaluation becomes more difficult. People begin to prioritize social alignment over accuracy, and questioning carries social risk.

Over time, this weakens both individual reasoning and collective understanding. Ideas can become unthinkable not because they have been disproven, but because they are no longer speakable within the accepted framework. That is one of propaganda’s greatest strengths and one of its greatest dangers.

The same pattern is now visible well beyond state propaganda, in advertising, campaign media, and viral content.