Why Accurate Ideas Often Face Early Resistance

The social forces that hinder the acceptance of new ideas

History is filled with people who were eventually proven correct but were initially ignored, ridiculed, or even punished for their ideas. This pattern is often framed as a failure of intelligence or openness in the surrounding community. A deeper explanation lies in social psychology. Being right too early is costly not because the evidence is weak, but because the social environment is not ready to absorb the claim.

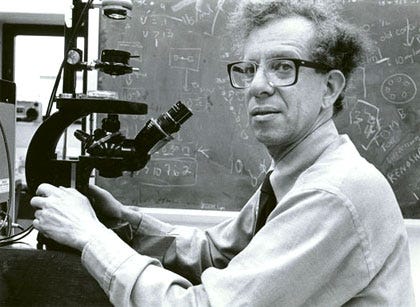

A well-known example is virologist Howard Temin’s work on reverse transcription. In the 1960s, Temin proposed that certain RNA viruses could copy their genetic information back into DNA, a claim that directly challenged the central dogma of molecular biology as it was then understood. Most geneticists viewed the idea as impossible, and Temin was widely ridiculed and his work dismissed. However, experiments published in 1970 confirmed the hypothesis, and Temin shared the 1975 Nobel Prize in Physiology or Medicine.

A more recent example comes from Roland Fryer’s empirical work on policing and race. In 2016, Fryer, a young economist at Harvard, analyzed large-scale police data and reported findings that challenged widely held public and academic assumptions, particularly regarding racial disparities in police shootings. The paper drew intense criticism. Some of it focused on methodological questions, but the reaction primarily reflected the politically charged environment surrounding the topic. Fryer faced significant professional and reputational costs. However, subsequent studies using expanded datasets and related methods have reached broadly similar conclusions on several key empirical points, and Fryer’s work is now widely cited in the academic literature.

Human groups are organized around cohesion. Shared beliefs reduce conflict and create predictability within the group. When someone introduces a claim that challenges an emerging consensus, members do not evaluate it in a purely evidential vacuum. They register it as social disruption.

One reason early accuracy is penalized is status risk. Established views are often tied to the credibility of respected figures, institutions, and group narratives. A challenge to the prevailing view is perceived, implicitly or explicitly, as a challenge to the people and structures that endorsed it. This raises the social stakes of accepting the new claim. Rejecting the dissenter is easier than revising the shared framework.

Another factor is uncertainty aversion. Novel claims typically appear before a full body of confirming evidence has accumulated. Early evidence is often partial, messy, and ambiguous. From the group’s perspective, caution is not irrational. However, social and psychological pressures often push beyond healthy skepticism into premature dismissal.

Timing also matters. Many correct ideas initially conflict with deeply embedded intuitions and moral narratives. When a claim feels not only unfamiliar but uncomfortable, motivated reasoning activates quickly. Members of the community scrutinize the new idea more harshly than they would a claim aligned with existing beliefs.

The modern reputation environment intensifies this dynamic. In many professional and public settings, people face asymmetric reputational risk. Being wrong alongside the majority is often socially safer than being correct in isolation. As a result, many wait for visible consensus before updating their views, even when early evidence is suggestive.

Independent thinkers face a persistent dilemma. Acting on early evidence can advance knowledge, but it may also carry career, social, and reputational costs. Remaining silent protects standing but slows collective learning. Different personalities resolve this tension differently. Some push forward despite the friction. Others wait until the evidence becomes socially safer to acknowledge.

Communities also engage in retrospective rewriting. Once a new view becomes widely accepted, earlier resistance is often minimized or forgotten. The group narrative shifts to emphasize eventual progress rather than the period of resistance. This creates the comforting but misleading impression that good ideas naturally rise on their merits.

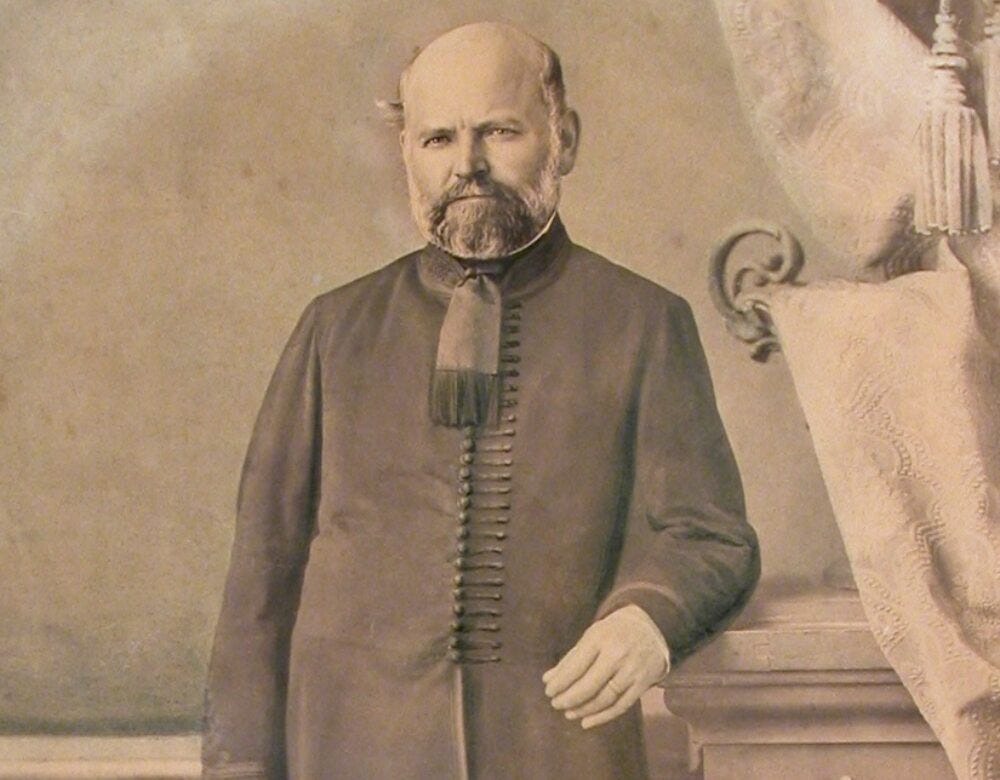

A classic example is Ignaz Semmelweis and handwashing in medicine. In the 1840s, Semmelweis, a physician, presented strong evidence that physician handwashing dramatically reduced deaths from childbed fever. Many in the medical establishment not only dismissed his findings but publicly mocked him. Some physicians were offended by the implication that, as gentlemen, their hands might be unclean. Semmelweis was eventually dismissed from his hospital post and faced sustained harassment from the medical community. He suffered a mental and physical breakdown and died in an asylum. Today, however, hand hygiene is considered a basic and common-sense component of medical practice. Many modern retellings downplay the prolonged resistance to Semmelweis, creating the misleading impression that the medical community quickly recognized and adopted the correct insight.

It is important not to romanticize early dissent. Many contrarian claims are wrong, and skepticism toward novel ideas is warranted. The challenge is calibration. Healthy systems maintain space for careful minority views while still demanding strong evidence. Dysfunctional systems either suppress dissent too aggressively or embrace novelty without sufficient scrutiny.

Organizations that want to improve their error-correction capacity must examine how they treat early-stage disagreement. Do members feel safe raising well-supported but unpopular concerns? Are evaluation standards applied consistently regardless of whether a claim aligns with the dominant narrative? Do leaders model intellectual humility when confronted with credible challenges?

At the individual level, a useful habit is to separate the social reception of a claim from its evidential strength. An idea being unpopular or professionally risky does not by itself indicate whether it is correct. Social friction and empirical weakness are different signals, though they are often conflated in practice.

Human groups naturally favor stability over disruption. This tendency serves many useful functions. However, it also means that being right too early often carries a predictable social cost. Recognizing this pattern does not guarantee that early dissenters are correct. It does remind us to evaluate claims on their evidence rather than on how comfortably they fit the current consensus.

This post insightfully illustrates how the togetherness forces of living systems serve survival-oriented purposes, yet can also overwhelm the individuality and thoughtful objectivity that serve survival as well. One way to deal with this paradox is through diversity of thought, welcoming dissident voices into dialogue, and humility about one's convictions.

As the post noted, this approach is not about privileging those who are outside the consensus. Rather, it calls for the scientific openness to give their views a fair and objective evaluation.